The other day I was talking with one of the guys at work, and while we were chatting about Photogrammetry an idea came to mind. There are millions of photographs online taken by tourists visiting archaeological sites, and famous monuments like the Parthenon or the Pyramids surely have their photograph taken many times a day. There is therefore an enormous bank of archaeological images at our fingertips, and if it is possible to reconstruct features and structures using as few as 20 images, surely there must be a way to reconstruct these monuments through Photogrammetry without even needing to be there.

As a result of this pondering I decided to test the idea by choosing a building that would be simple enough to reconstruct, yet famous enough for many images of it to be available. A few months ago I had started working on a reconstruction of Stonehenge from a series of photographs I had found online, but with limited (yet useful) results. Hence I decided to try with something solid, like the Great Sphinx.

I then proceeded to find all the images I could, collecting approximately 200 of them from all angles. Surprisingly, the right side of the monument had far fewer pictures than the rest of it. I then ran the photos in different combinations in an attempt to reconstruct as accurate a model as possible.
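A gap in coverage like the one on the right side can be made visible with a few lines of Python. The sketch below is purely illustrative: it assumes each photo has been given a rough viewing angle around the monument by hand (0 to 360 degrees), and the `photos` dictionary and the `pick_by_angle` function are hypothetical names made up for this example. It splits the circle into equal sectors, keeps the photo closest to the middle of each sector, and reports the sectors that have no photo at all.

```python
from collections import defaultdict


def pick_by_angle(photos, n_sectors=20):
    """Pick one photo per angular sector around the monument.

    photos: dict mapping filename -> viewing angle in degrees
            (hypothetical values, estimated by hand).
    Returns (selected filenames, indices of sectors with no photo).
    """
    width = 360.0 / n_sectors
    sectors = defaultdict(list)
    for name, angle in photos.items():
        angle = angle % 360.0
        # Clamp guards against floating-point edge cases at 360 degrees.
        index = min(int(angle // width), n_sectors - 1)
        sectors[index].append((name, angle))

    selected, empty = [], []
    for i in range(n_sectors):
        if not sectors[i]:
            empty.append(i)  # nobody photographed this side
            continue
        centre = (i + 0.5) * width
        # Keep the photo taken closest to the middle of the sector.
        best = min(sectors[i], key=lambda p: abs(p[1] - centre))
        selected.append(best[0])
    return selected, empty


if __name__ == "__main__":
    demo = {"a.jpg": 5, "b.jpg": 10, "c.jpg": 100, "d.jpg": 185}
    chosen, gaps = pick_by_angle(demo, n_sectors=4)
    print("selected:", chosen)
    print("sectors with no photos:", gaps)
```

With 20 sectors this gives at most 20 photos spread evenly around the monument, and the list of empty sectors shows where more pictures still need to be found.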

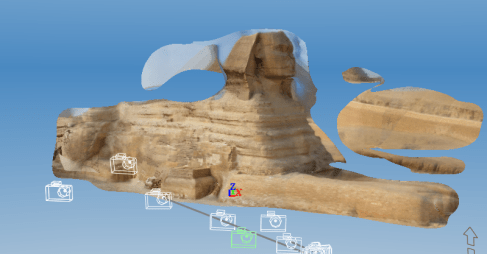

The following is the closest result I have had yet:

Agreed, much more work is needed. But honestly I am surprised that I managed to get this far! The level of detail is quite striking in some places, and if half of it has worked the other half should also be possible. It is a matter of finding the right combination of images.

So far I have tried:

- Running only the high-quality images.

- Running only the images with camera details.

- Running 20 photos selected based on the angles.

- Creating the face first and then attaching the rest of the body.

- Running only images with no camera details (to see if any results appeared, which they did).

- Increasing the contrast in the images.

- Sharpening the images.

- Manually stitching the remaining images in.

All of these have produced results, but none as good as the one above. I have also tried running the images in Agisoft PhotoScan, with similarly poor results.
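Splitting the images into those with and without camera details does not have to be done by hand. The following is a minimal sketch, assuming that "camera details" means an EXIF metadata block embedded in a JPEG file. The function names `has_exif` and `split_by_exif` are hypothetical, and the check only confirms that an EXIF block is present, not that it contains anything useful such as the focal length.

```python
import struct


def has_exif(data: bytes) -> bool:
    """Return True if the JPEG bytes contain an APP1 segment marked 'Exif'."""
    if data[:2] != b"\xff\xd8":  # every JPEG starts with the SOI marker
        return False
    pos = 2
    while pos + 4 <= len(data):
        if data[pos] != 0xFF:  # not at a marker: give up on this file
            return False
        marker = data[pos + 1]
        if marker in (0xDA, 0xD9):  # image data or end of file reached
            return False
        # Segment length is big-endian and includes its own two bytes.
        (length,) = struct.unpack(">H", data[pos + 2:pos + 4])
        if marker == 0xE1 and data[pos + 4:pos + 10] == b"Exif\x00\x00":
            return True
        pos += 2 + length
    return False


def split_by_exif(paths):
    """Sort file paths into (with camera details, without camera details)."""
    with_exif, without_exif = [], []
    for path in paths:
        with open(path, "rb") as handle:
            # Metadata sits near the start, so 64 KB is normally enough.
            header = handle.read(65536)
        (with_exif if has_exif(header) else without_exif).append(path)
    return with_exif, without_exif
```

Calling `split_by_exif` on a list of downloaded files would return the two groups, ready to be run separately.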

The model above was produced by pure chance when I uploaded 100 of the images, so I’m hoping that by going through that selection I may be able to find a pattern.

I shall post any updates here, so stay tuned!